DBeaver is capable of loading the database, but will not render the exception field, instead throwing SQL Error: Unsupported result column type STRUCT("name" VARCHAR, properties STRUCT(message VARCHAR)). In addition, support for the STRUCT data structure in Parquet is iffy. This has immediate implications as Tad is not capable of loading newer DuckDB files.

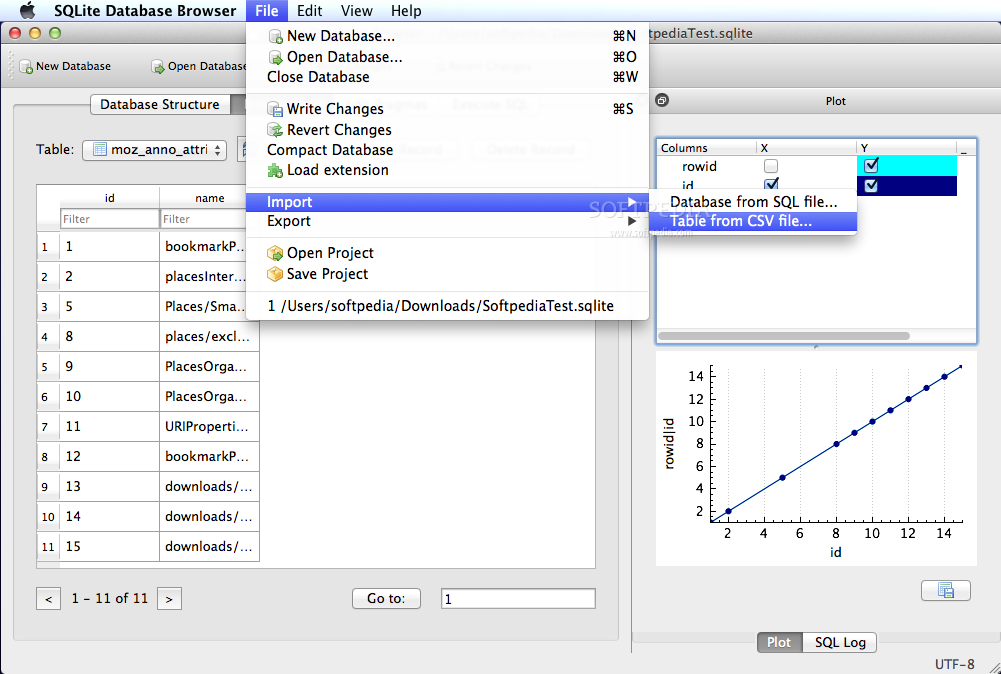

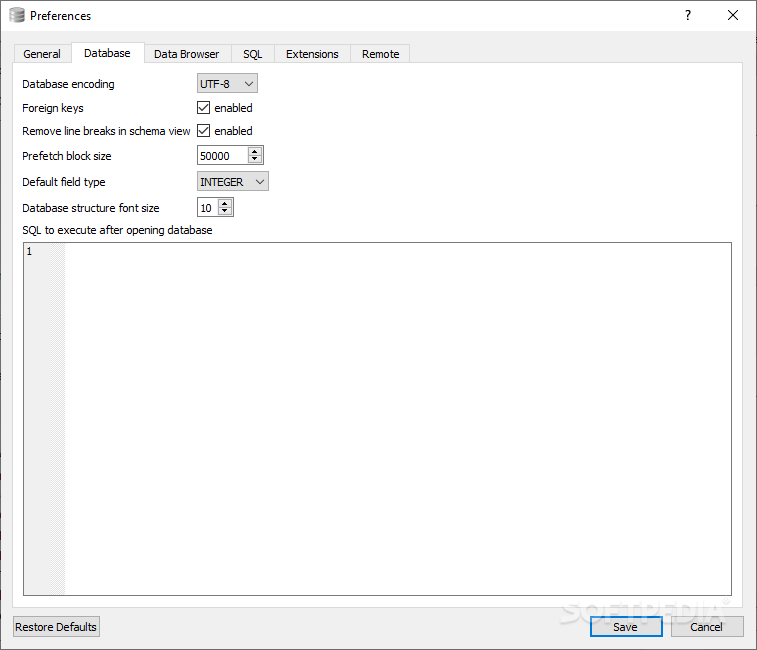

While DuckDB has advantages over SQLite, storage is not stable – newer versions of DuckDB cannot read old database files and vice versa. Installation is a single binary zip file: There's a blog post with examples – let's try it out on logs and see what happens. That's not a problem with the new version: as of DuckDB 0.7.0, DuckDB can read NDJSON files in directly and infer a schema from the values. I haven't used duckdb extensively as it requires that a schema is defined before you import. DuckDB is like SQLite, but focused on analytics – it focuses on processing entire columns at once, rather than a row at a time. SQLite does have some disadvantages in that it processes rows sequentially, and so asking it aggregate or analytical questions like "what are the 10 most common user agent strings" can take a while on large datasets. lines_nofs0 which would provide a web application UI for Sqlite, but I haven't tried this. Interestingly, sqlite-lines can be used with Datasette with datasette data.db -load-extension. Saving the table and exporting it to your local desktop is also very simple, and gives you the option of using a database GUI like DB Browser for SQLite. Adding the sqlite-lines extension is as simple as getting the static library: Using sqlite3 can be more convenient than using jq or other JSON processing command line tools for digging around in logs. The benchmarks show that parsing NDJSON directly using sqlite-lines is far faster than using Python, but I would take the benchmarks with a grain of salt when it comes to duckdb, as he is using an older API there.

sqlite-linesĪlex Garcia released sqlite-lines in June specifically to read NDJSON. TL DR: With NDJSON support, slurping structured logs into a "no dependencies" database like SQLite or DuckDB is easier than ever. What we really want is an in-process "no dependencies" database that you can easily download that can work with NDJSON as a native datasource. Spark is great at NDJSON dataframes, but Spark is a heavyweight solution that we can't just install on a host. As such, getting NDJSON into a database and getting an actual schema is still a somewhat manual process compared to CSV. The de-facto standard for structured logging is newline-delimited JSON (NDJSON), and there is only a loose concept of a "schema" – structured logging can have high cardinality, and there's usually only a few guaranteed common fields such as timestamp, level and logger_name. Using SQL has a number of advantages over using JSON processing tools or log viewers, such as the ability to progressively build up views while filtering or querying, better timestamp support, and the ability to do aggregate query logic.īut structured logging isn't what most databases are used to. I've written a bit about querying structured logging with SQLite and the power of data science when it comes to logging by using Apache Spark. Structured logging and databases are a natural match – there's easily consumed structured data on one side, and tools for querying and presenting data on the other.
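
DuckDB's JSON reader maps nested JSON objects to STRUCT columns. That is how a nested `exception` object ends up with the type shown in the DBeaver error. The toy sketch below illustrates the idea. The hypothetical `infer_type` and `infer_schema` helpers walk NDJSON records and build DuckDB-style type names. This is a deliberate simplification, not DuckDB's actual inference algorithm, which samples records, reconciles conflicting types and handles many more cases.

```python
import json
from typing import Any, Dict


def infer_type(value: Any) -> str:
    """Toy mapping from one JSON value to a DuckDB-style type name (hypothetical)."""
    if value is None:
        return "NULL"  # placeholder: the real type has to come from another record
    if isinstance(value, bool):  # test bool before int: bool subclasses int
        return "BOOLEAN"
    if isinstance(value, int):
        return "BIGINT"
    if isinstance(value, float):
        return "DOUBLE"
    if isinstance(value, str):
        return "VARCHAR"  # a real engine would also try date/timestamp formats
    if isinstance(value, list):
        # Toy rule: use the first element's type instead of unifying all elements.
        element = infer_type(value[0]) if value else "NULL"
        return f"{element}[]"
    if isinstance(value, dict):
        # Nested JSON objects become STRUCTs, recursively.
        fields = ", ".join(f"{key} {infer_type(val)}" for key, val in value.items())
        return f"STRUCT({fields})"
    raise TypeError(f"not a JSON value: {value!r}")


def infer_schema(ndjson: str) -> Dict[str, str]:
    """Union the keys of every NDJSON record; the first non-NULL type wins (toy rule)."""
    schema: Dict[str, str] = {}
    for line in ndjson.splitlines():
        if not line.strip():
            continue  # tolerate blank lines
        for key, value in json.loads(line).items():
            if schema.get(key, "NULL") == "NULL":
                schema[key] = infer_type(value)
    return schema


logs = "\n".join([
    '{"timestamp": "2023-02-20T10:00:00Z", "level": "INFO", "logger_name": "app"}',
    '{"timestamp": "2023-02-20T10:00:01Z", "level": "ERROR", "logger_name": "app", '
    '"exception": {"name": "IOException", "properties": {"message": "disk full"}}}',
])

for column, column_type in infer_schema(logs).items():
    print(column, column_type)
```

The sketch prints one line per column. The `exception` column comes out as `STRUCT(name VARCHAR, properties STRUCT(message VARCHAR))`, which has the same shape as the type in the error, minus DuckDB's quoting of `"name"`.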
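
One possible workaround for viewers that cannot render STRUCT columns is to flatten nested objects into plain scalar columns before loading. The sketch below is an illustration under stated assumptions, not the approach used in this post. It swaps in Python's standard-library `json` and `sqlite3` modules in place of sqlite-lines and DuckDB. The `flatten` and `load` helpers, the dotted column names such as `exception.properties.message`, and the `user_agent` field are all hypothetical. It also layers a filtered view and runs a "most common user agent strings" style of aggregate query.

```python
import json
import sqlite3
from typing import Any, Dict, List


def flatten(record: Dict[str, Any], prefix: str = "") -> Dict[str, Any]:
    """Hypothetical helper: turn nested objects into dotted scalar keys."""
    flat: Dict[str, Any] = {}
    for key, value in record.items():
        name = f"{prefix}{key}"
        if isinstance(value, dict):
            flat.update(flatten(value, prefix=f"{name}."))
        elif isinstance(value, list):
            flat[name] = json.dumps(value)  # keep arrays as JSON text
        else:
            flat[name] = value
    return flat


def load(conn: sqlite3.Connection, ndjson: str, table: str = "logs") -> None:
    """Hypothetical loader: one column per flattened key seen in any record.

    Assumes keys and the table name contain no double quotes.
    """
    rows: List[Dict[str, Any]] = [
        flatten(json.loads(line)) for line in ndjson.splitlines() if line.strip()
    ]
    # NDJSON logs have only a loose schema, so take the union of all keys.
    columns = sorted({key for row in rows for key in row})
    column_list = ", ".join(f'"{c}"' for c in columns)
    placeholders = ", ".join("?" for _ in columns)
    # SQLite accepts columns without declared types.
    conn.execute(f'CREATE TABLE "{table}" ({column_list})')
    conn.executemany(
        f'INSERT INTO "{table}" ({column_list}) VALUES ({placeholders})',
        [tuple(row.get(c) for c in columns) for row in rows],  # missing keys -> NULL
    )


logs = "\n".join([
    '{"level": "INFO", "user_agent": "curl/8.0"}',
    '{"level": "INFO", "user_agent": "Mozilla/5.0"}',
    '{"level": "INFO", "user_agent": "curl/8.0"}',
    '{"level": "ERROR", "user_agent": "curl/8.0", '
    '"exception": {"name": "IOException", "properties": {"message": "disk full"}}}',
])

conn = sqlite3.connect(":memory:")
load(conn, logs)

# Views can be layered: filter once, then query the filtered view.
conn.execute("CREATE VIEW errors AS SELECT * FROM logs WHERE level = 'ERROR'")
error_messages = conn.execute(
    'SELECT "exception.name", "exception.properties.message" FROM errors'
).fetchall()

# The "most common user agents" style of aggregate query.
top_agents = conn.execute(
    "SELECT user_agent, COUNT(*) AS n FROM logs "
    "GROUP BY user_agent ORDER BY n DESC LIMIT 10"
).fetchall()

print(error_messages)  # [('IOException', 'disk full')]
print(top_agents)      # [('curl/8.0', 3), ('Mozilla/5.0', 1)]
```

Flattening trades DuckDB's typed STRUCT columns for plain columns that any SQLite GUI can display. The cost is that the nesting only survives in the column names.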
